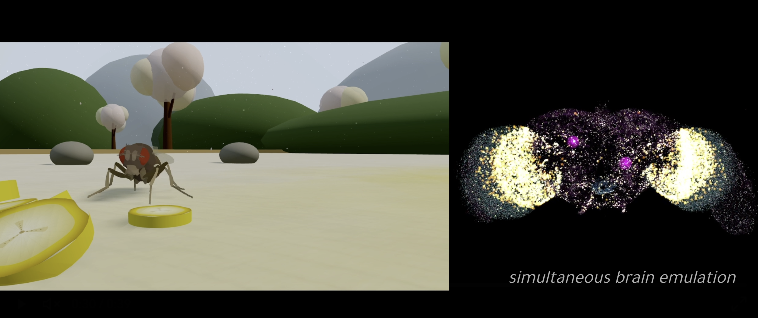

Imagine a tiny fruit fly buzzing around your kitchen. Now picture that same fly living entirely inside a computer, navigating a virtual world, grooming itself, and even hunting for food. That’s exactly what the Eon Team at Eon Systems has achieved with their virtual embodied fly. This groundbreaking project blends neuroscience and computer simulation to bring a digital insect to life. In this article, we’ll explore how they did it, why it matters, and what comes next.

First, let’s understand the big picture. The Eon Team wanted to show how a brain’s wiring diagram, called a connectome, can drive real behaviors in a virtual body. They focused on the fruit fly because scientists know a lot about its brain. The virtual embodied fly therefore serves as a test bed for bigger ideas in brain emulation.

What Is Embodied Brain Emulation?

Embodied brain emulation means creating a digital copy of a brain and linking it to a simulated body. Unlike simple AI models that learn from data, this approach copies the brain’s structure directly. The Eon Team built on years of research to make it happen.

For instance, they used the adult fly connectome from a 2024 study by Dorkenwald and others. This map details how neurons connect in the fly’s brain. Additionally, they incorporated brain models from Lappalainen’s team, which predict how visual signals flow through neural circuits.

However, emulation goes beyond just the brain. You need a body that interacts with the world. That’s where embodiment comes in. The team connected the digital brain to a virtual fly body, allowing it to sense and move. As a result, the virtual embodied fly can respond to its environment in realistic ways.

Why Choose the Fruit Fly?

Fruit flies, or Drosophila, make perfect subjects for this work. Scientists have studied them for decades. Their brains are small but complex, with about 140,000 neurons. That’s manageable compared to the tens of billions of neurons in a human brain.

Moreover, flies show interesting behaviors like foraging and grooming. These actions stem from specific neural pathways. For example, when a fly detects dust on its antennae, it triggers a cleaning routine. The Eon Team replicated this in their simulation.

Another key point is accessibility. Researchers have mapped the fly’s connectome in detail. They know neurotransmitter types and synapse strengths. Therefore, building a virtual version becomes feasible. In contrast, larger animals pose bigger challenges due to scale.

Building the Virtual Brain

The Eon Team started with a leaky integrate-and-fire model from Shiu’s 2024 research. This model simulates how neurons fire based on inputs. They based it on the fly’s central brain connectome, which includes 50 million connections.
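
To make the idea of a leaky integrate-and-fire neuron concrete, here is a minimal sketch in plain Python. It is not the Eon Team’s or Shiu’s code. The parameter values, the three-neuron toy circuit, and names such as `LIFParams`, `lif_step`, and `count_output_spikes` are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LIFParams:
    tau_ms: float = 20.0    # membrane time constant (assumed value)
    v_rest: float = 0.0     # resting potential, arbitrary units
    v_thresh: float = 1.0   # spike threshold
    v_reset: float = 0.0    # potential right after a spike
    dt_ms: float = 1.0      # integration step


def lif_step(v, spikes_in, weights, external, p):
    """Advance all neurons by one step.

    v         -- membrane potential per neuron
    spikes_in -- 0/1 flags for which neurons spiked on the previous step
    weights   -- weights[post][pre]; negative entries act as inhibition
    external  -- external input per neuron (e.g. sensory drive)
    Returns (new_v, spikes_out).
    """
    new_v, spikes_out = [], []
    for post, v_post in enumerate(v):
        synaptic = sum(w * s for w, s in zip(weights[post], spikes_in))
        leak = (p.v_rest - v_post) * (p.dt_ms / p.tau_ms)  # decay toward rest
        v_next = v_post + leak + synaptic + external[post]
        fired = v_next >= p.v_thresh
        spikes_out.append(1 if fired else 0)
        new_v.append(p.v_reset if fired else v_next)
    return new_v, spikes_out


# Toy circuit: neuron 0 excites neuron 2, neuron 1 inhibits it.
WEIGHTS = [
    [0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0],
    [0.6, -0.6, 0.0],
]


def count_output_spikes(external, steps=20):
    """Count how often neuron 2 fires under a constant external drive."""
    p = LIFParams()
    v, spikes, total = [0.0] * 3, [0] * 3, 0
    for _ in range(steps):
        v, spikes = lif_step(v, spikes, WEIGHTS, external, p)
        total += spikes[2]
    return total


excited = count_output_spikes([1.5, 0.0, 0.0])    # only the excitatory input is driven
cancelled = count_output_spikes([1.5, 1.5, 0.0])  # inhibition cancels the excitation
print(excited, cancelled)
```

Each neuron leaks toward its resting value, sums its weighted inputs, and resets after crossing the threshold. A connectome-scale model applies the same update to far more neurons, with weights taken from the wiring diagram rather than typed in by hand.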

To add realism, they included neurotransmitter signs. Some excite neurons, while others inhibit them. This setup helps recover sensorimotor structures for behaviors like feeding.
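
One way to picture neurotransmitter signs is as a lookup that turns raw synapse counts into positive or negative weights. The sketch below assumes a hypothetical edge-list format and a hypothetical sign table. The article does not say which transmitters the team treated as excitatory or inhibitory, so the table here is only a placeholder.

```python
from collections import defaultdict

# Assumed sign table for illustration only: +1 excites, -1 inhibits.
TRANSMITTER_SIGN = {"acetylcholine": +1, "GABA": -1, "glutamate": -1}


def signed_weights(edges, sign_table=TRANSMITTER_SIGN):
    """Build signed connection weights from a connectome edge list.

    edges -- iterable of (pre_id, post_id, synapse_count, transmitter)
    Returns {post_id: {pre_id: signed_weight}}.
    """
    weights = defaultdict(dict)
    for pre, post, count, transmitter in edges:
        sign = sign_table.get(transmitter)
        if sign is None:
            continue  # transmitter not in the table: skipped in this sketch
        weights[post][pre] = weights[post].get(pre, 0) + sign * count
    return dict(weights)


# Made-up neuron IDs and counts, not real connectome data.
example_edges = [
    ("A", "C", 12, "acetylcholine"),
    ("B", "C", 5, "GABA"),
    ("A", "B", 3, "dopamine"),
]
print(signed_weights(example_edges))
```

Here neuron C ends up with a weight of +12 from A and -5 from B, while the dopamine edge is dropped because this toy table has no sign for it.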

Furthermore, vision plays a big role. The team integrated Lappalainen’s visual model, which covers 64 cell types. It predicts activity in motion pathways. Then, they piped this data into the main brain model. As shown above, this creates a loop where visual cues influence actions.

However, the brain isn’t static. Neurons update every 15 milliseconds in the simulation. That pace allows for quick responses, though it’s slower than real time for some behaviors.

Creating the Virtual Body

No brain works alone. It needs a body to act on the world. The Eon Team used NeuroMechFly, a model from Wang-Chen’s 2024 paper. This digital fly has 87 joints, based on X-ray scans of a real insect.

They simulated it in MuJoCo, a physics engine that handles forces and contacts. Consequently, the virtual body moves naturally, with legs that step across the ground. Wing flapping may follow in future versions.

Sensory inputs make it lifelike. For taste, the model activates receptor neurons for sweet or bitter stimuli. Olfaction works similarly for smells. Touch senses dust, triggering grooming.
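
As a rough illustration of what “activating receptor neurons” can mean in code, the sketch below maps a taste stimulus to firing rates for a small group of receptor neurons. The neuron labels, the linear scaling, and the `max_rate_hz` value are assumptions for this example, not details from the Eon Team’s model.

```python
# Hypothetical receptor groups; real IDs would come from the connectome.
RECEPTORS = {
    "sweet": ["GRN_sweet_1", "GRN_sweet_2"],
    "bitter": ["GRN_bitter_1"],
}


def encode_taste(stimulus, intensity, max_rate_hz=150.0):
    """Return {neuron_id: firing rate in Hz} for a taste stimulus.

    intensity is clamped to the range [0, 1] and scaled linearly.
    """
    if stimulus not in RECEPTORS:
        raise ValueError(f"unknown stimulus: {stimulus}")
    intensity = max(0.0, min(1.0, intensity))
    return {neuron: intensity * max_rate_hz for neuron in RECEPTORS[stimulus]}


print(encode_taste("sweet", 0.5))
```

Smell and touch could follow the same pattern, each with its own group of receptor neurons feeding the brain model.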

In addition, vision comes from simulated eyes. The brain processes these signals and decides what to do next. This closed loop, from sensing to acting, brings the virtual embodied fly to life.

Connecting Brain and Body

Integration ties everything together. The Eon Team created interfaces between the digital brain and body. Descending neurons carry signals from brain to muscles.

For grooming, specific neurons activate foreleg movements. Steering uses others for turning left or right. Forward speed comes from yet another set.

They translated these neural firings into motor commands. Like driving a car, you don’t simulate every piston—just the controls. Similarly, the team used trained controllers to handle joint torques.
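
The step from neural firing to motor commands can be sketched as a small decoder. The version below is a guess at the general shape, not the team’s actual interface. The neuron group names (`forward`, `turn_left`, `turn_right`, `groom`), the scaling, and the grooming threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MotorCommand:
    forward_speed: float  # 0..1, fraction of top walking speed
    turn: float           # -1 (full left) .. +1 (full right)
    groom: bool           # whether to start the foreleg grooming routine


def clamp(x, lo, hi):
    return max(lo, min(hi, x))


def decode_descending(rates_hz, max_rate_hz=100.0, groom_threshold_hz=20.0):
    """Turn descending-neuron firing rates into a high-level command."""
    left = rates_hz.get("turn_left", 0.0)
    right = rates_hz.get("turn_right", 0.0)
    return MotorCommand(
        forward_speed=clamp(rates_hz.get("forward", 0.0) / max_rate_hz, 0.0, 1.0),
        turn=clamp((right - left) / max_rate_hz, -1.0, 1.0),
        groom=rates_hz.get("groom", 0.0) >= groom_threshold_hz,
    )


command = decode_descending({"forward": 50.0, "turn_left": 10.0, "turn_right": 30.0})
print(command)
```

A command like this would then go to a lower-level controller that works out the joint torques, much as the steering wheel and pedals hide the engine’s details from the driver.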

This setup runs in real time. Every 15 ms, the sensors update, the brain computes, the body moves, and feedback loops back to the brain. As a result, behaviors emerge naturally.
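
The cycle of sensing, computing, and moving can be written as a short loop. The sketch below keeps the 15 ms step from the article and replaces everything else with toy stand-ins. The “body” is only a distance to food, and the `sense`, `think`, and `act` functions are hypothetical placeholders for the real sensors, brain model, and physics engine.

```python
STEP_MS = 15  # update interval quoted in the article


def run_closed_loop(sense, think, act, body_state, duration_ms):
    """Run sense -> brain -> body repeatedly; return the body state after each step."""
    trace = []
    for _ in range(duration_ms // STEP_MS):
        observation = sense(body_state)                 # sensors update
        command = think(observation)                    # brain computes
        body_state = act(body_state, command, STEP_MS)  # body moves
        trace.append(body_state)                        # new state feeds the next cycle
    return trace


# Toy stand-ins: the body state is just a distance to food in millimetres.
def sense(distance_mm):
    return 1.0 / (1.0 + distance_mm)    # signal grows as the fly gets closer


def think(signal):
    return 0.0 if signal >= 0.5 else 1.0  # walk until the signal is strong, then stop


def act(distance_mm, speed_command, dt_ms, mm_per_ms=0.01):
    return max(0.0, distance_mm - speed_command * mm_per_ms * dt_ms)


trace = run_closed_loop(sense, think, act, body_state=3.0, duration_ms=600)
print(len(trace), round(trace[-1], 2))
```

Even in this toy version, the stopping behavior is not written as a rule about distance. It falls out of the loop once the sensed signal crosses the threshold.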

Behaviors in Action

Watch the virtual embodied fly in motion, and you’ll see impressive actions. It navigates toward food using taste cues. Invisible banana slices draw it in.

When dust builds up, it grooms its antennae with forelegs. This mimics real fly behavior, based on mechanosensory pathways.

Upon reaching food, it eats. Sugar on legs or proboscis triggers feeding motor programs. Meanwhile, it forages by exploring the arena.

Even escape responses appear. Looming threats activate the relevant neurons, though this behavior is not fully implemented yet. Together, these behaviors show what the model can do.

For example, the fly slows and turns based on tastes. Bitter ones repel, sweet ones attract. Such details make the simulation convincing.

Challenges and Limitations

No project is perfect. The Eon Team acknowledges several issues. The brain model simplifies neuron dynamics. It lacks dendritic details and plasticity.

Moreover, internal states like hunger aren’t included. Neuromodulators, which adjust behavior, remain absent. Therefore, the fly doesn’t adapt over time.

Brain-body links are approximate. The team hand-picked mappings, but electrophysiology could refine them. Reinforcement learning might help too.

Additionally, the descending interface covers only a fraction of neurons. Flies have over 1,000 such cells, working in networks. Expanding this would add complexity.

Despite these hurdles, the virtual embodied fly demonstrates key concepts. It offers a test bed for sensorimotor control, though it does not prove that the connectome’s structure alone is sufficient to produce behavior.

Future Directions

Looking ahead, the Eon Team plans more. They aim to improve fidelity, adding biophysical details. Learning mechanisms could make the fly smarter.

Broader implications excite many. This work paves the way for emulating larger brains. Imagine virtual mice or even human-like systems someday.

However, ethical questions arise. What if simulations become conscious? We must think carefully.

Collaboration is key. Eon invites partners to build on this. Contact them to join the effort.

In the meantime, this project makes brain emulation concrete. It bridges neuroscience and AI in new ways.

Wrapping It Up

To sum up, the Eon Team’s virtual embodied fly marks a milestone in brain emulation. By integrating connectomes, brain models, and physics simulation, they’ve created a digital creature that behaves much like the real thing. While challenges remain, the potential is huge. What will the future hold if we keep pushing boundaries?
